Use a robotic platform

Addressing real-life experiments (such as dynamic auditory scene analysis in a search and rescue scenario) requires deploying and running the Two!Ears Auditory Model on a robotic platform. To assess the active and exploratory features of the model and its ability to handle multi-modality, the robot must be endowed with binaural perception, adequate mobility, and other sensing modalities. It must come with a software platform that enables the concurrent execution of all the processes involved in the model. To ensure reproducible research, this architecture must allow as much software as possible to be shared when switching from one platform to another.

These requirements call for a comprehensive, modular software architecture composed of two distinct parts. Its lower, functional layer consists of components which run concurrently under severe time constraints and communicate through control or data flow in real time. Typical components subject to real-time performance are sensorimotor functions, such as locomotion, proprioceptive or exteroceptive data acquisition and processing, SLAM, obstacle avoidance, reactive navigation, etc. Many such components interact with the environment, so that several local perception-action loops take place in this layer. Typical issues are component reusability (e.g., by encapsulating low-level locomotion primitives or sensor drivers under interfaces common to all the robots used), formal proofs of dependability and scalability. Higher in the architecture, the decisional/cognitive layer hosts deliberation primitives. Such purposeful, chosen or planned actions are critical for robot autonomy in variable environments. Among the ingredients of deliberation in robotics, one can cite learning, goal reasoning, task planning, deliberate action, perception (bottom-up as well as top-down) and monitoring. These abilities take place at a more abstract level, under lighter time constraints. The functional and cognitive layers are connected with each other through a bridge.
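The layering described above can be illustrated with a minimal Python sketch. It is not the Two!Ears implementation: the names `HeadDriver`, `SimulatedHead`, `Bridge`, `functional_layer` and `deliberative_layer` are hypothetical, and plain threads and queues stand in for the real middleware. It only shows a robot-specific driver hidden behind a common interface, a functional component running concurrently, and a bridge carrying commands down and reports up.

```python
import queue
import threading
from abc import ABC, abstractmethod


class HeadDriver(ABC):
    """Hypothetical common interface hiding robot-specific neck control."""

    @abstractmethod
    def rotate_to(self, azimuth_deg: float) -> float:
        """Turn the head and return the azimuth actually reached."""


class SimulatedHead(HeadDriver):
    """Stand-in driver; a real one would talk to the hardware."""

    def __init__(self, limit_deg: float = 90.0) -> None:
        self.limit_deg = limit_deg
        self.azimuth_deg = 0.0

    def rotate_to(self, azimuth_deg: float) -> float:
        # Clamp the request to the (assumed) mechanical range.
        self.azimuth_deg = max(-self.limit_deg, min(self.limit_deg, azimuth_deg))
        return self.azimuth_deg


class Bridge:
    """Two queues linking the layers: commands go down, reports go up."""

    def __init__(self) -> None:
        self.commands = queue.Queue()
        self.reports = queue.Queue()


def functional_layer(driver: HeadDriver, bridge: Bridge) -> None:
    """Runs in its own thread and executes commands until it receives None."""
    while True:
        target = bridge.commands.get()
        if target is None:
            break
        bridge.reports.put(driver.rotate_to(target))


def deliberative_layer(bridge: Bridge, targets):
    """Sends each planned target and waits for the corresponding report."""
    reached = []
    for target in targets:
        bridge.commands.put(target)
        reached.append(bridge.reports.get(timeout=1.0))
    bridge.commands.put(None)  # ask the functional layer to stop
    return reached


def run_demo():
    bridge = Bridge()
    worker = threading.Thread(
        target=functional_layer, args=(SimulatedHead(), bridge)
    )
    worker.start()
    reached = deliberative_layer(bridge, [30.0, 120.0, -45.0])
    worker.join()
    return reached
```

Because the deliberative code only sees `Bridge` and the `HeadDriver` interface, swapping `SimulatedHead` for another driver would leave the upper layer unchanged, which is the kind of software sharing across platforms that the text asks for.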

The Two!Ears Auditory Model will involve functions for robot locomotion, streaming of binaural signals, SLAM, motion planning and navigation, as well as low-level visual functions for the detection, segmentation and tracking of people or objects. In view of the above, these functions belong to the functional layer. As shown later, they are implemented in C/C++ under the ROS middleware, which is ubiquitous in robotics. To improve their specification, the organisation of their code, their reliability, their robustness and their sustainability, they are designed with the middleware-independent GenoM3 framework. Conversely, the Blackboard system belongs to the deliberative layer. A Matlab bridge has been designed to connect these two sets of primitives. The monaural and binaural processors of the Auditory front-end should in principle be incorporated in the functional layer; their C/C++ transcoding into software components is therefore ongoing.

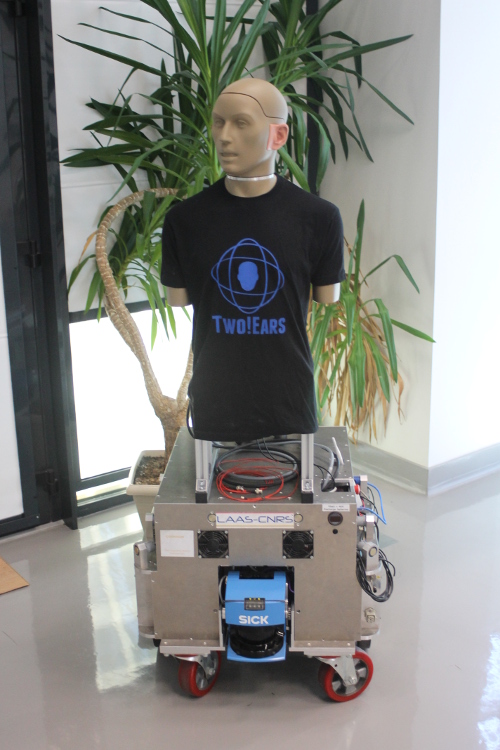

Platforms of increasing complexity have been planned. Frank is a binaural head-on-a-stick system, that is, a KEMAR head-and-torso simulator which we fitted with an original, silent, controllable device enabling neck rotation. The device can also be equipped with stereo vision if needed. Frank has been mounted on Jido, a Neobotix MP-L655 nonholonomic mobile robot, to provide translational degrees of freedom for long-range navigation (Fig. 2). Importantly, all the functional components presented hereafter have been extensively evaluated.

Fig. 2 Frank, a KEMAR head-and-torso simulator mounted on Jido, a mobile robot.

The current release of the Two!Ears Auditory Model provides BASS, an audio streaming server component which can be used to acquire the binaural signals from Frank or another dummy head from within Matlab. See the robot documentation for an introduction on how to get started with the robotic software. We also provide a tutorial on how to build your own rotatable dummy head, and on how to use the aforementioned Matlab bridge to steer its movements from within Matlab.
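To give an idea of what acquiring binaural signals from a streaming server involves on the client side, here is a small Python sketch of a polling client. It does not use or reproduce the BASS interface: `FakeBinauralServer`, `BinauralClient`, `read_since` and the chunk layout are all assumptions made for illustration. The server is assumed to keep only its most recent chunks, so a client that polls too slowly can detect how many chunks it missed.

```python
from collections import deque


class FakeBinauralServer:
    """Hypothetical stand-in for an audio streaming server (not the BASS API).

    Only the most recent `capacity` chunks are kept, as in a ring buffer.
    """

    def __init__(self, chunk_size: int, capacity: int) -> None:
        self.chunk_size = chunk_size
        self._chunks = deque(maxlen=capacity)
        self._next_index = 0

    def capture(self) -> None:
        """Simulate the capture of one stereo chunk (left, right)."""
        start = self._next_index * self.chunk_size
        left = [float(n) for n in range(start, start + self.chunk_size)]
        right = [-x for x in left]
        self._chunks.append((self._next_index, left, right))
        self._next_index += 1

    def read_since(self, last_index: int):
        """Return the buffered chunks whose index is greater than last_index."""
        return [chunk for chunk in self._chunks if chunk[0] > last_index]


class BinauralClient:
    """Accumulates both ear signals and counts chunks lost between polls."""

    def __init__(self, server: FakeBinauralServer) -> None:
        self.server = server
        self.last_index = -1
        self.left = []
        self.right = []
        self.lost_chunks = 0

    def poll(self) -> None:
        for index, left, right in self.server.read_since(self.last_index):
            # A jump in the chunk index means the buffer was overwritten.
            self.lost_chunks += index - self.last_index - 1
            self.left.extend(left)
            self.right.extend(right)
            self.last_index = index
```

A typical use would call `poll()` at a rate fast enough for `lost_chunks` to stay at zero; the chunk size and buffer capacity that achieve this depend on the actual server and are not specified here.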